The first browser-agent bug I kept seeing was not an AI bug. It was a selector bug. The agent clicked `.auth-actions button:first-child`. After a redesign, that selector pointed at "Sign Up" instead of "Sign In." The agent did exactly what the code told it to do.

Browser Agent Protocol (BAP) is my attempt to make the boundary between what the agent intends and what the code actually targets explicit. An agent should not need a custom Playwright wrapper for every project. It should get a small set of browser tools: navigate, observe, click, fill, extract. The observation should be structured enough for a model to reason over, and stable enough to survive normal UI churn.

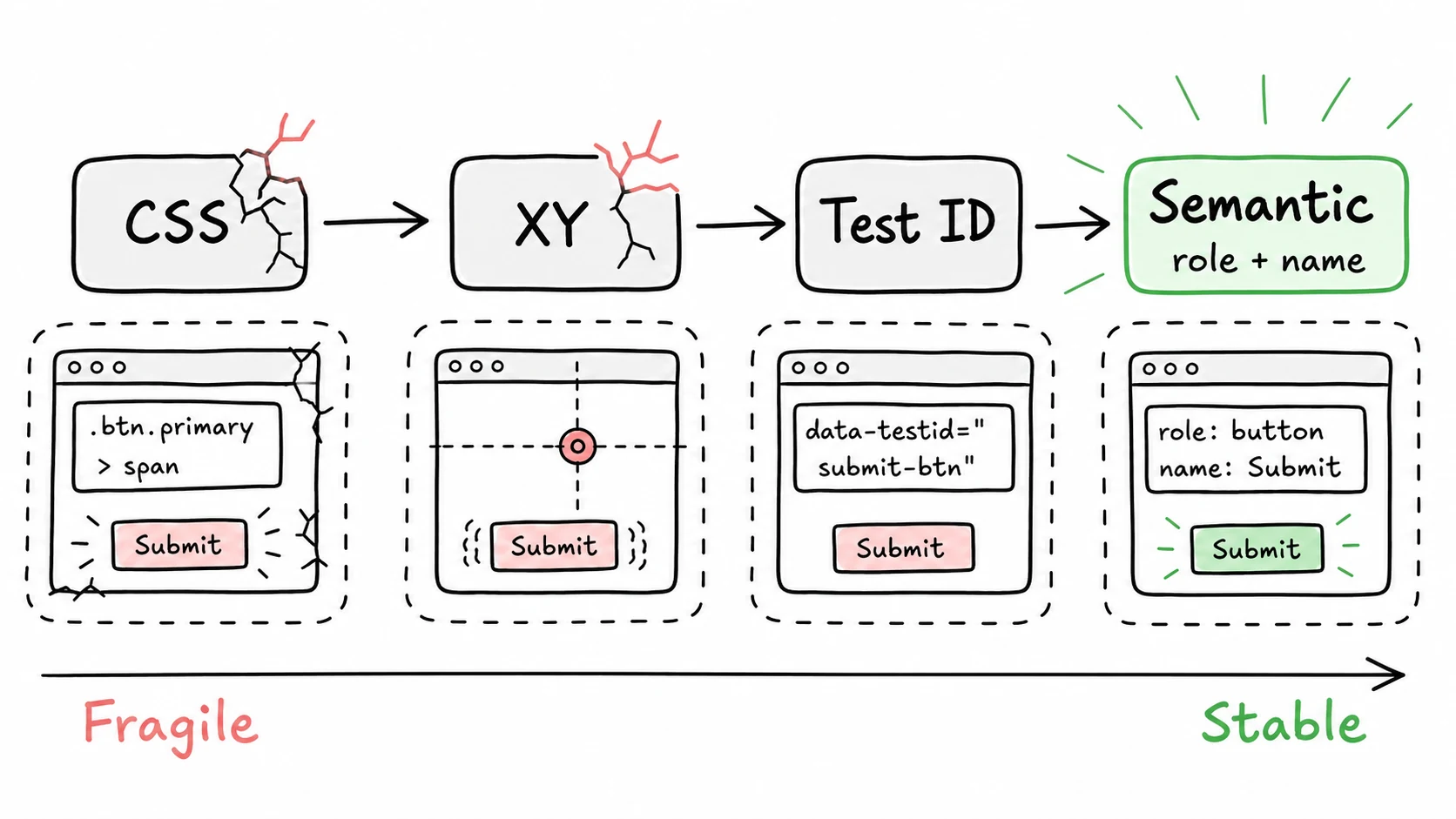

The core move is semantic selectors:

```javascript
// Fragile: where the button happened to be
await page.click('.auth-form button[type="submit"]')

// BAP-style: what the button is
await browser.click({ role: 'button', name: 'Sign In' })
```

The accessibility tree already gives browsers this information. A screen reader does not care that a button uses Tailwind classes or sits inside three wrapper divs. It cares that the element is a button named "Sign In." Browser agents need the same abstraction.
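To make the difference concrete, here is a minimal sketch that compares a positional lookup with a role-and-name lookup over a toy accessibility tree. The node shape (`role`, `name`, `children`) and the helpers `firstButton` and `findByRoleAndName` are hypothetical and exist only for this illustration; they are not BAP's API, and a real accessibility tree carries far more state.

```javascript
// Hypothetical, simplified accessibility nodes: { role, name, children }.

// Semantic lookup: depth-first search for a node with the given role and name.
function findByRoleAndName(node, role, name) {
  if (node.role === role && node.name === name) return node;
  for (const child of node.children ?? []) {
    const match = findByRoleAndName(child, role, name);
    if (match) return match;
  }
  return null;
}

// Positional lookup: the first button in document order,
// roughly what a ":first-child"-style selector ends up relying on.
function firstButton(node) {
  if (node.role === 'button') return node;
  for (const child of node.children ?? []) {
    const match = firstButton(child);
    if (match) return match;
  }
  return null;
}

const beforeRedesign = {
  role: 'group',
  name: 'Auth actions',
  children: [
    { role: 'button', name: 'Sign In' },
    { role: 'button', name: 'Sign Up' },
  ],
};

// The redesign swaps the order and nests "Sign In" inside a wrapper.
const afterRedesign = {
  role: 'group',
  name: 'Auth actions',
  children: [
    { role: 'button', name: 'Sign Up' },
    {
      role: 'generic',
      name: '',
      children: [{ role: 'button', name: 'Sign In' }],
    },
  ],
};

// The positional lookup silently changes targets across the redesign.
console.log(firstButton(beforeRedesign).name);
console.log(firstButton(afterRedesign).name);

// The semantic lookup resolves to the same button in both layouts.
console.log(findByRoleAndName(beforeRedesign, 'button', 'Sign In').name);
console.log(findByRoleAndName(afterRedesign, 'button', 'Sign In').name);
```

The positional helper never fails loudly; it just returns a different button. The semantic helper either finds the button named "Sign In" or returns `null`, which is a much easier failure to notice.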

BAP exposes that through an MCP server and a CLI. A typical turn is: observe the page, choose a semantic target, act, then observe again. Element refs are stable within a session, and the action log gives you something to debug when a page behaves differently than expected.
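The turn described above can be sketched with an in-memory stand-in for a browser session. Everything here is an assumption made for illustration: the `FakeSession` class, its `observe` and `click` methods, the `e1`-style ref format, and the shape of the log entries are hypothetical and are not taken from BAP's actual MCP tools or CLI.

```javascript
// Hypothetical in-memory stand-in for a browser session; not BAP's real API.
class FakeSession {
  constructor(elements) {
    this.elements = elements; // [{ role, name, onClick? }]
    this.refs = new Map();    // element -> ref, kept for the session's lifetime
    this.nextRef = 1;
    this.actionLog = [];
  }

  // Return a structured observation. An element keeps its ref across calls.
  observe() {
    return this.elements.map((el) => {
      if (!this.refs.has(el)) this.refs.set(el, `e${this.nextRef++}`);
      return { ref: this.refs.get(el), role: el.role, name: el.name };
    });
  }

  // Act on a ref from a previous observation and record the attempt.
  click(ref) {
    const el = this.elements.find((e) => this.refs.get(e) === ref);
    const ok = Boolean(el);
    this.actionLog.push({ action: 'click', ref, ok });
    if (!ok) throw new Error(`unknown ref: ${ref}`);
    if (el.onClick) el.onClick(this);
  }
}

const session = new FakeSession([
  { role: 'button', name: 'Sign Up' },
  {
    role: 'button',
    name: 'Sign In',
    // Simulate the page changing after the click.
    onClick: (s) => s.elements.push({ role: 'heading', name: 'Welcome back' }),
  },
]);

// One turn: observe, choose a semantic target, act, observe again.
const before = session.observe();
const target = before.find((n) => n.role === 'button' && n.name === 'Sign In');
session.click(target.ref);
const after = session.observe();

console.log(after);
console.log(session.actionLog);
```

In this sketch the second observation contains the new heading, the "Sign In" button keeps the ref it had in the first observation, and the log holds one entry for the click. That record is what you would inspect when a page behaves differently than expected.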

What I like about this design is that it does not pretend the web is clean. Pages have modals, iframes, hidden buttons, slow network calls, and inconsistent markup. BAP does not make those disappear. It gives the agent a narrower, more inspectable interface to work through.

The repo is public here: browseragentprotocol/bap.