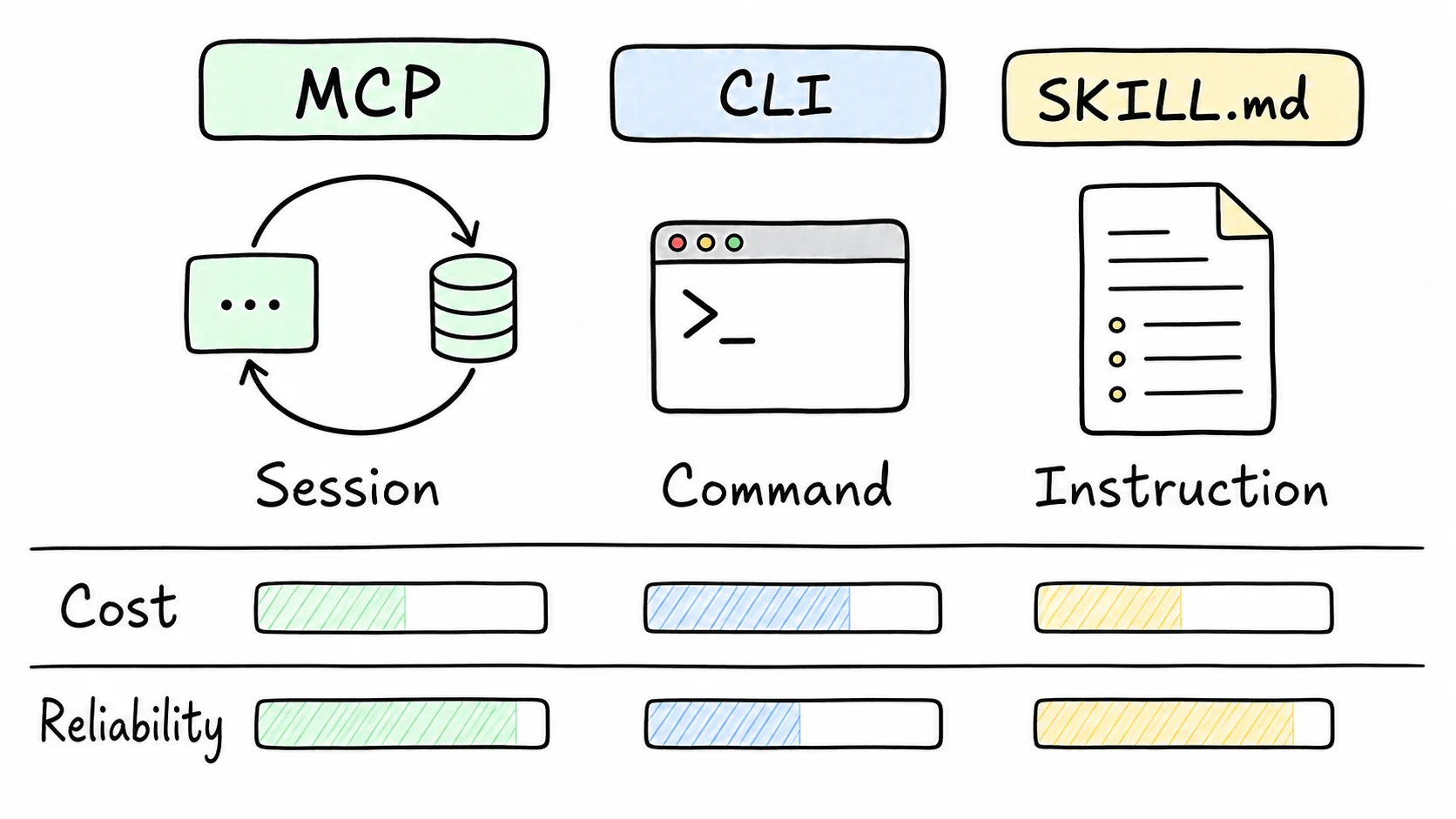

I do not think MCP, CLIs, and SKILL.md compete with each other. They sit at different layers. The mistake is picking one because it is fashionable instead of asking what kind of boundary the agent actually needs.

| Interface | When I reach for it |
|---|---|
| MCP | The agent needs a typed, stateful connection to tools or data. |
| CLI | The job is deterministic, local, scriptable, and CI-friendly. |
| SKILL.md | The capability is mostly instructions, workflow, examples, or policy. |

BAP uses MCP because a browser session has state. The agent navigates, observes, clicks, fills, waits, and observes again. Those actions belong in a session with a clear protocol boundary.
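To make the state argument concrete, here is a minimal sketch of why those actions want a session. It is not BAP's real API: `BrowserSession`, its fields, and the "observe before you click" check are all hypothetical names I made up for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class BrowserSession:
    """Hypothetical stand-in for a browser session; not BAP's real API."""

    url: Optional[str] = None
    snapshot_id: int = 0         # bumps whenever the page may have changed
    observed_snapshot: int = -1  # the snapshot the agent last looked at
    log: List[str] = field(default_factory=list)

    def navigate(self, url: str) -> None:
        self.url = url
        self.snapshot_id += 1
        self.log.append(f"navigate {url}")

    def observe(self) -> dict:
        if self.url is None:
            raise RuntimeError("navigate before observing")
        self.observed_snapshot = self.snapshot_id
        self.log.append("observe")
        return {"url": self.url, "snapshot": self.snapshot_id}

    def click(self, selector: str) -> None:
        # Acting on a stale view is the kind of bug a session boundary can catch.
        if self.observed_snapshot != self.snapshot_id:
            raise RuntimeError("page changed since last observe; observe again")
        self.snapshot_id += 1  # a click may change the page
        self.log.append(f"click {selector}")
```

None of these calls make sense in isolation. `click` is only valid relative to what the session last observed, and that shared context is what a stateless command cannot carry.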

skill-tools uses a CLI because the work is stateless. Parse this file. Lint this directory. Score these skills. A CLI is easy to run locally, easy to put in GitHub Actions, and easy for a human to debug with --help and exit codes.
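A stateless command of this shape can be sketched in a few lines. This is not skill-tools' actual interface: the program name `lint-skills`, the `lint_file` helper, and the single "file must not be empty" rule are assumptions for illustration.

```python
import argparse
import sys
from pathlib import Path


def lint_file(path: Path) -> list:
    """Return a list of problems for one file (hypothetical rule: must not be empty)."""
    if not path.is_file():
        return [f"{path}: not a file"]
    if not path.read_text(encoding="utf-8").strip():
        return [f"{path}: file is empty"]
    return []


def main(argv=None) -> int:
    parser = argparse.ArgumentParser(
        prog="lint-skills",  # hypothetical name
        description="Lint skill files.",
    )
    parser.add_argument("paths", nargs="+", type=Path, help="files to lint")
    args = parser.parse_args(argv)  # argparse provides --help for free

    problems = [p for path in args.paths for p in lint_file(path)]
    for problem in problems:
        print(problem, file=sys.stderr)
    # 0 means clean, 1 means problems found; argparse exits with 2 on usage errors.
    return 1 if problems else 0
```

Saved as a script, it would end with `raise SystemExit(main())`. Each run depends only on its arguments and the files on disk, so the same command behaves identically on a laptop and in a CI job.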

staff-engineer uses SKILL.md-style instruction files because a lot of the value is behavioral. How should the agent review a diff? When should it ask for confirmation? What should it consider a risky file? That is workflow knowledge, not an API.

Here is the rule I use now:

- If the agent needs a long-lived connection, use MCP.

- If the agent needs to run one deterministic operation, use a CLI.

- If the agent needs to learn a repeatable workflow, write a skill.

- If humans and agents both need the same capability, consider shipping more than one interface over the same core.
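The rule above can be written down as a small function, mostly to show that the answers are not mutually exclusive. The `Need` fields and the `recommend` name are my own labels for the four bullets, not an established API.

```python
from dataclasses import dataclass


@dataclass
class Need:
    """What the agent needs; field names are hypothetical labels for the bullets."""

    long_lived_connection: bool = False
    single_deterministic_operation: bool = False
    repeatable_workflow: bool = False
    shared_by_humans_and_agents: bool = False


def recommend(need: Need) -> list:
    """Map needs to interfaces; several can apply at once."""
    advice = []
    if need.long_lived_connection:
        advice.append("MCP")
    if need.single_deterministic_operation:
        advice.append("CLI")
    if need.repeatable_workflow:
        advice.append("SKILL.md")
    # Shared capability but fewer than two interfaces so far: suggest a second one.
    if need.shared_by_humans_and_agents and len(advice) < 2:
        advice.append("consider a second interface over the same core")
    return advice
```

For example, `recommend(Need(long_lived_connection=True, shared_by_humans_and_agents=True))` returns `["MCP", "consider a second interface over the same core"]`.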

The last item in that list matters. BAP has an MCP interface for agents and a CLI for humans and CI. That is not duplication. It is the same capability presented through two useful doors.
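As a rough sketch of "one core, two doors": the core is a plain function, and each interface is a thin adapter over it. This is a toy capability, not BAP's code. `score_skill`, `handle_tool_call`, and `run_cli` are hypothetical names, and the scoring heuristic is made up.

```python
import json
import sys


def score_skill(text: str) -> dict:
    """Core capability (hypothetical): check that a skill file has a title line."""
    lines = text.splitlines()
    has_title = any(line.startswith("# ") for line in lines)
    return {"lines": len(lines), "has_title": has_title, "ok": has_title}


def handle_tool_call(arguments: dict) -> dict:
    """Agent door: structured arguments in, structured result out."""
    return score_skill(arguments["text"])


def run_cli(stdin=sys.stdin, stdout=sys.stdout) -> int:
    """Human and CI door: text on stdin, JSON on stdout, result in the exit code."""
    result = score_skill(stdin.read())
    json.dump(result, stdout)
    stdout.write("\n")
    return 0 if result["ok"] else 1
```

Both adapters stay a few lines long because neither contains any logic of its own. A fix to `score_skill` reaches agents, humans, and CI at the same time.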

I also try to keep token cost visible. Tool schemas, help text, and long instructions all consume context. The cheapest interface is the one that gives the agent exactly enough structure to act safely and no more.
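One way to keep that cost visible is a crude estimator run over everything that lands in context. The sketch below assumes roughly four characters per token, which is only a rule of thumb. Real tokenizers vary, so treat the numbers as relative, not exact. `estimate_tokens` and `context_cost` are hypothetical helpers.

```python
CHARS_PER_TOKEN = 4  # rough rule of thumb (an assumption); real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Estimate token count with ceiling division over character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def context_cost(parts: dict) -> dict:
    """Estimate tokens for each named piece of context, plus a total."""
    costs = {name: estimate_tokens(text) for name, text in parts.items()}
    costs["total"] = sum(costs.values())
    return costs
```

Feeding it the serialized tool schema, the `--help` output, and the instruction file side by side makes it easy to see which one dominates before deciding what to trim.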

Related: MCP in 60 seconds, skill-tools, and BAP.